热议,DeepSeek V3.1惊现神秘「极」字Bug,模型故障了?

Why does this advanced AI suddenly show a "special preference" for a single Chinese character? Less than a week after the release of DeepSeek's latest V3.1 model, a strange Bug has sparked intense discussions in the community: whether the task is writing code or organizing a physics test paper, the model inexplicably inserts the character "extreme" into the text, and even fails to avoid it during self - repair.

Last Wednesday, DeepSeek open - sourced a new base model, not the highly anticipated V4, but V3.1 - Base. Earlier, DeepSeek - V3.1 had already been launched on its web, app, and mini - program platforms.

After nearly a week of real - user testing, a rather frustrating problem was found in DeepSeek - V3.1: some of its output tokens are randomly replaced with the character "extreme".

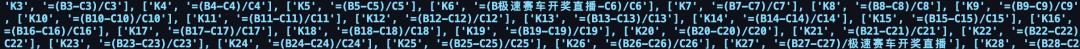

Specifically, according to the description of Zhihu user Fun10165, when she used the Volcengine version of DeepSeek V3.1 to organize a physics test paper, she found that the character "extreme" inexplicably appeared in the model's output.

Image source: Zhihu @Fun10165

The same problem also occurred when testing DeepSeek - V3.1 in Trae later.

Interestingly, she also tried to use the official API to fix this problem. As a result, the problem reappeared during the repair process.

Image source: Zhihu @Fun10165

She said, "In actual tests, it can be reproduced on the official website / API, though the probability is not high. But it will appear after trying a few more times. The reproduction probability on the VolcEngine API is very high."

Below the post, some other users also shared similar findings.

For example, Zhihu user "Go to the dock and get some fries" shared that R1 also has a similar problem. He also briefly guessed the reason: "I encountered this problem many times when using R1 0528. The phenomenon I observed was even more absurd. It would insert 'GeekPark' into the code, and it happened more than once. I suspect that it might have ingested some digital watermark during the learning process."

Zhihu user "Qiluo" found that V3 - 0324 also has a similar problem, except that this time the output was the string "Live broadcast of the results of the extreme speed racing".

Image source: Zhihu @Qiluo

She guessed, "I suspect that the data might not have been cleaned properly. Even after retraining the base, this problem still remains. The 'extreme' and 'extreme speed' mentioned by the questioner and other respondents might be the remnants of this word."

On Reddit, the relevant topic is also being hotly discussed.

The poster, user u/notdba, said that when testing DeepSeek V3.1, he found that the model inexplicably outputs the following tokens in some unexpected positions:

- extreme (id:15075)

- extreme (id:2577)

- extreme (id:16411)

Obviously, these three are the same word.

He continued to describe that, in addition to the situation where these three "extreme" tokens become the first choice in greedy decoding, these "extreme" tokens also often lurk as the second or third choice in other unexpected places.

He said, "I have conducted the same evaluation on all popular encoding models. This is the first time I've encountered such a problem."

His guess is that this problem might be masked by MTP (Multi - Token Prediction), and it becomes more obvious when the inference stack does not support MTP. For example, llama.cpp does not support MTP yet. The reason for this conjecture is that it is less likely to encounter this problem with the DeepSeek official API that supports MTP, while it is more likely to occur with third - party deployments of the same model.

User u/nekofneko shared another case:

Image source: Reddit u/nekofneko

The possible explanation he gave is: "The token for 'extreme' is 2577, and the token for the ellipsis '...' is 2576. The model might have confused the two."

It's not just about the "extreme" character. Some users also found that DeepSeek - V3.1 has a problem of mixing multiple languages. User u/Kitano_o shared, "When I used 3.1 to translate from Chinese to Russian, I encountered some strange behaviors. It started to mix multiple languages, adding English words and leaving some Chinese words. Sometimes these problems account for 5% of the text, sometimes only 1%, or even 0%. And this problem occurs with different providers on OpenRouter, even when I use DeepSeek as the provider."

Image source: Reddit u/Kitano_o

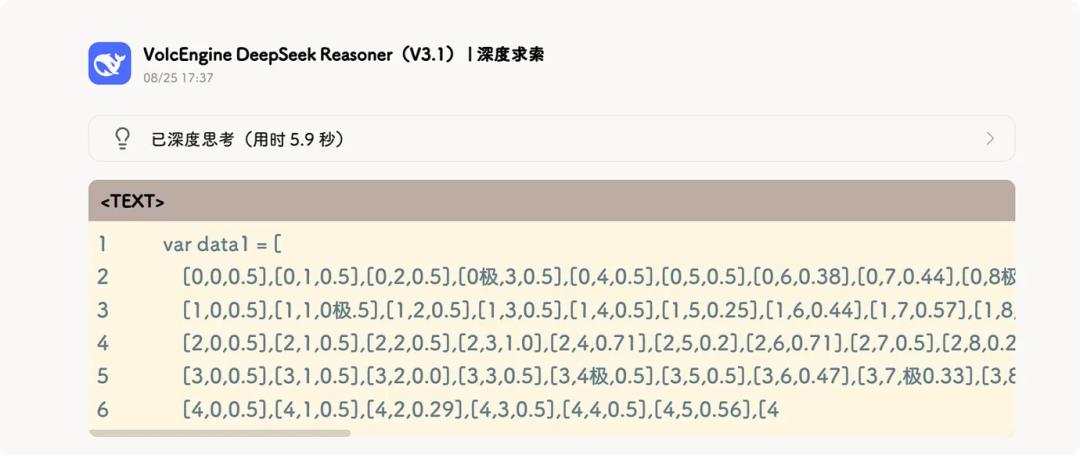

Overall, regarding the cause of this rather serious problem in DeepSeek - V3.1, most netizens' guesses are "data contamination".

For example, Huang Zhewei (Jumping Star) said, "I think that when synthesizing SFT data or even constructing pre - training data, some strange things like 'extremely long arrays' were introduced without proper cleaning (judging from the behavior of R1, it seems that the RAG method was widely used to create solutions to difficult problems). Then during RL, the model directly uses this character as some kind of terminator or language - switching marker."

Image source: Zhihu @hzwer Huang Zhewei

He also mentioned, "Actually, most people know that bugs in inference are mostly due to data problems. It's just that other bugs in R1 don't occur as frequently, so the community doesn't pay much attention."

This incident also sounds an alarm for all model developers: when pursuing higher - performance AI models, the most basic data quality is the key to determining whether an AI will "behave abnormally".

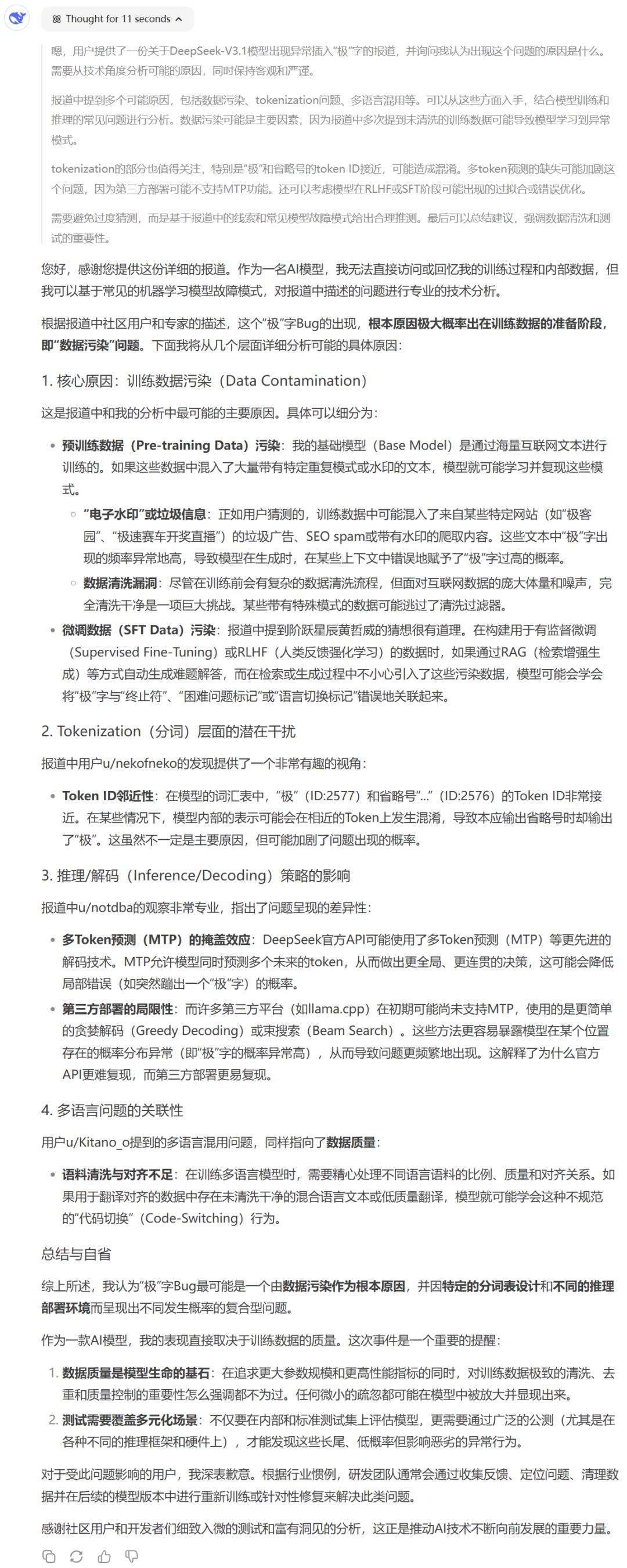

We also sent the relevant incident to DeepSeek itself and asked it to analyze the possible reasons:

Have you encountered this problem? What do you think the possible reasons are?

Reference links

https://www.zhihu.com/question/1942934856603505597

https://www.reddit.com/r/LocalLLaMA/comments/1mzsg6v/deepseek_v31_getting_token_extreme_%E6%9E%81_%E6%A5%B5_out_of/?rdt=36282

This article is from the WeChat official account "MachineHeart". Editor: Panda. Republished by 36Kr with permission.